CollaQ

Overview

CollaQ (Zhang et al. 2020), short for Collaborative Q-learning, is a multi-agent collaboration approach based on Q-learning that formulates multi-agent collaboration as a joint optimization problem over reward assignments. CollaQ decomposes the decentralized Q-value function of each agent into two terms: a self term that relies only on the agent’s own state, and an interactive term that depends on the states of nearby agents. Both terms are trained jointly with the standard DQN loss, regularized by a Multi-Agent Reward Attribution (MARA) loss.
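The decomposition described above can be illustrated with a minimal sketch. It is not the DI-engine implementation: the linear Q-functions, the helper names (`linear_q`, `mask_others`, `collaq_q_values`, `mara_penalty`), the feature layout, and the choice to average the penalty over actions are all assumptions made for illustration. The idea shown is that the interactive term is penalized whenever it is non-zero on an observation in which the other agents have been masked out.

```python
import random

def linear_q(weights, features):
    """Return one Q-value per action: Q[a] = sum_k weights[a][k] * features[k]."""
    return [sum(w * x for w, x in zip(row, features)) for row in weights]

def mask_others(obs, n_self):
    """Build the 'alone' observation: keep the agent's own features, zero the rest."""
    return obs[:n_self] + [0.0] * (len(obs) - n_self)

def collaq_q_values(w_alone, w_collab, obs, n_self):
    """Q_i = self term (own state only) + interactive term (full local observation)."""
    q_alone = linear_q(w_alone, obs[:n_self])
    q_collab = linear_q(w_collab, obs)
    return [a + c for a, c in zip(q_alone, q_collab)]

def mara_penalty(w_collab, obs, n_self):
    """Regularizer sketch: squared interactive term on the 'alone' observation,
    averaged over actions (an illustrative simplification)."""
    q_collab_alone = linear_q(w_collab, mask_others(obs, n_self))
    return sum(q * q for q in q_collab_alone) / len(q_collab_alone)

# Toy sizes (illustrative): 2 own features, 3 features of nearby agents, 4 actions.
rng = random.Random(0)
n_self, n_other, n_actions = 2, 3, 4
w_alone = [[rng.uniform(-1, 1) for _ in range(n_self)] for _ in range(n_actions)]
w_collab = [[rng.uniform(-1, 1) for _ in range(n_self + n_other)] for _ in range(n_actions)]
obs = [0.5, -0.2, 1.0, 0.0, 0.3]

q = collaq_q_values(w_alone, w_collab, obs, n_self)
penalty = mara_penalty(w_collab, obs, n_self)
```

In this toy setting the penalty vanishes exactly when the interactive term ignores the agent’s own features, which is the behavior the regularizer is meant to encourage.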

Quick Facts

CollaQ is a model-free and value-based multi-agent RL approach.

CollaQ only supports discrete action spaces.

CollaQ is an off-policy algorithm.

CollaQ considers a partially observable scenario in which each agent only obtains individual observations.

CollaQ uses a DRQN architecture for individual Q-learning.

Unlike QMIX and VDN, CollaQ does not need a centralized Q-function; instead, it expands the individual Q-function of each agent with a reward assignment that depends on the joint state.
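As a rough illustration of what the facts above imply at execution time, the sketch below picks a discrete action for each agent from that agent’s own Q-values only, without consulting any joint Q-function. The function name `select_actions` and the use of epsilon-greedy exploration are assumptions for this example, not details taken from the CollaQ implementation.

```python
import random

def select_actions(per_agent_q, epsilon, rng):
    """Epsilon-greedy selection performed independently for every agent.

    per_agent_q: one list of Q-values (over discrete actions) per agent.
    """
    actions = []
    for q_values in per_agent_q:
        if rng.random() < epsilon:
            # Explore: uniformly random discrete action.
            actions.append(rng.randrange(len(q_values)))
        else:
            # Exploit: index of the largest individual Q-value.
            actions.append(max(range(len(q_values)), key=q_values.__getitem__))
    return actions

# Two agents, three discrete actions each (values are made up).
per_agent_q = [[0.1, 0.9, 0.3], [0.7, 0.2, 0.4]]
greedy = select_actions(per_agent_q, epsilon=0.0, rng=random.Random(0))
```

With `epsilon=0.0` each agent simply takes the argmax of its own Q-values.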

Key Equations or Key Graphs

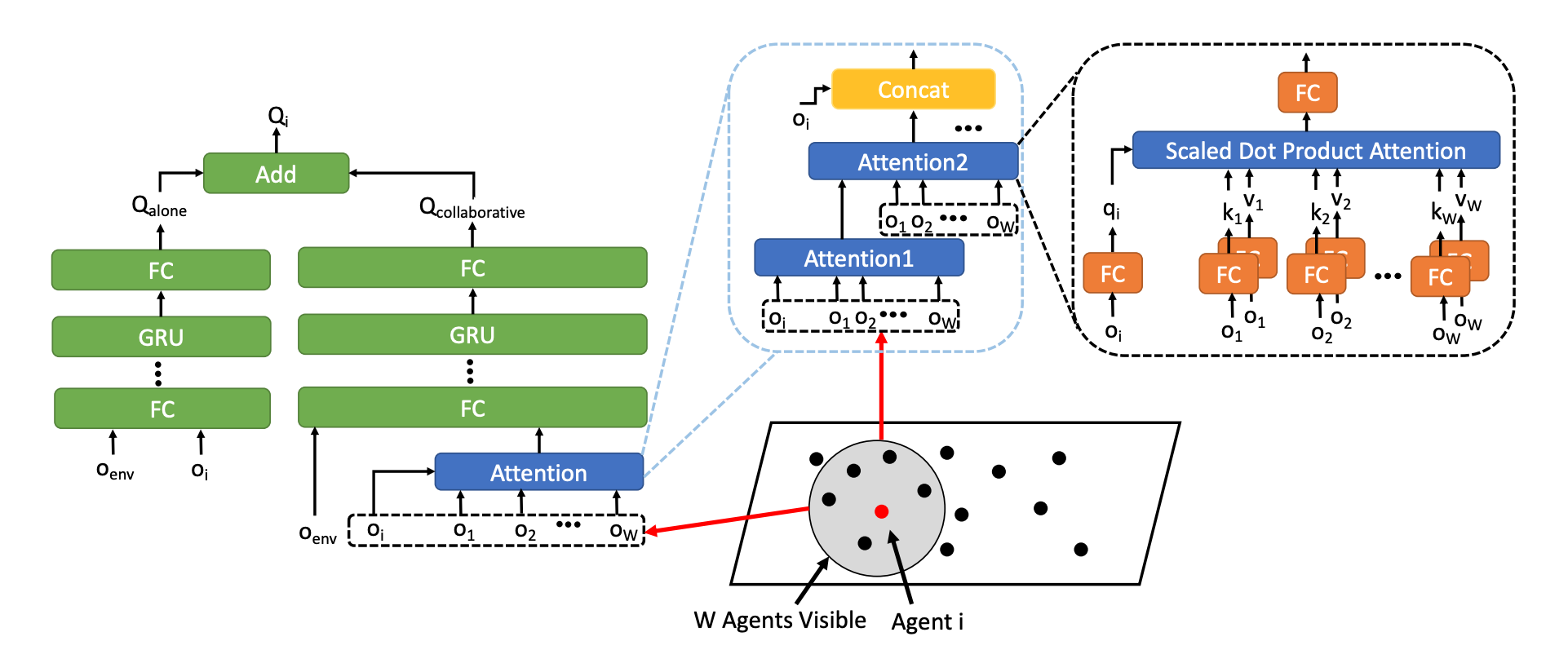

The overall architecture of the Q-function with attention-based model in CollaQ:

The Q-function for agent i:
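The formula itself is not reproduced in this text. A hedged reconstruction, following the two-term decomposition described in the Overview and in Zhang et al. (2020), is given here. The notation is an assumption: $o_i$ is the local observation of agent $i$, $o_i^{alone}$ is the part of it that describes only the agent’s own state, and $a_i$ is its action.

```latex
Q_i(o_i, a_i) = Q_i^{alone}(o_i^{alone}, a_i) + Q_i^{collab}(o_i, a_i)
```

Here $Q_i^{alone}$ plays the role of the self term and $Q_i^{collab}$ the role of the interactive term.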

The overall training objective of standard DQN training with MARA loss:
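The objective is likewise not reproduced in this text. A hedged sketch consistent with Zhang et al. (2020) is shown here, assuming the following notation: $o_i$ is the local observation of agent $i$, $o_i^{alone}$ is the observation with other agents removed, $Q_i^{collab}$ is the interactive term of $Q_i$, $y$ is the usual DQN bootstrap target, and $\alpha$ is a weighting coefficient for the MARA term.

```latex
L = \mathbb{E}_{(o_i, a_i)} \Big[ \big( y - Q_i(o_i, a_i) \big)^2
    + \alpha \, \big( Q_i^{collab}(o_i^{alone}, a_i) \big)^2 \Big]
```

The first term is the standard DQN regression loss; the second pushes the interactive term toward zero when the agent observes only itself.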

Extensions

CollaQ can optionally use an attention-based architecture. This is useful because an agent’s observation can be spatially large and can cover agents whose states contribute little to that agent’s policy. Specifically, CollaQ uses a transformer architecture (stacking multiple layers of attention modules), which empirically improves performance in multi-agent tasks.
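To make the role of attention concrete, here is a minimal single-head scaled dot-product attention sketch in plain Python. It is only an illustration of the mechanism, not the transformer used in CollaQ: the function names and the two-dimensional embeddings are hypothetical. The acting agent’s embedding serves as the query, and the embeddings of other observed agents serve as keys and values, so agents that are less relevant to the query receive smaller weights.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Single-head scaled dot-product attention for one query vector."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    # Weighted sum of the value vectors, one output entry per dimension.
    pooled = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return pooled, weights

# Hypothetical embeddings: the acting agent is the query, other agents are keys/values.
own = [1.0, 0.0]
others = [
    [2.0, 0.0],   # aligned with the query: largest weight
    [0.0, 2.0],   # orthogonal to the query
    [-2.0, 0.0],  # opposed to the query: smallest weight
]
pooled, weights = attend(own, others, others)
```

A transformer stacks several such attention layers (with learned projections), which this sketch omits for brevity.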

Implementations

The default config is defined as follows:

The network interface CollaQ used is defined as follows:

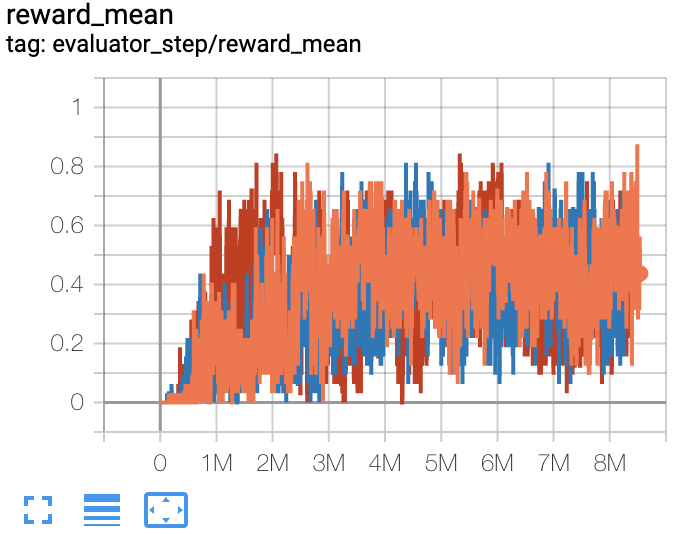

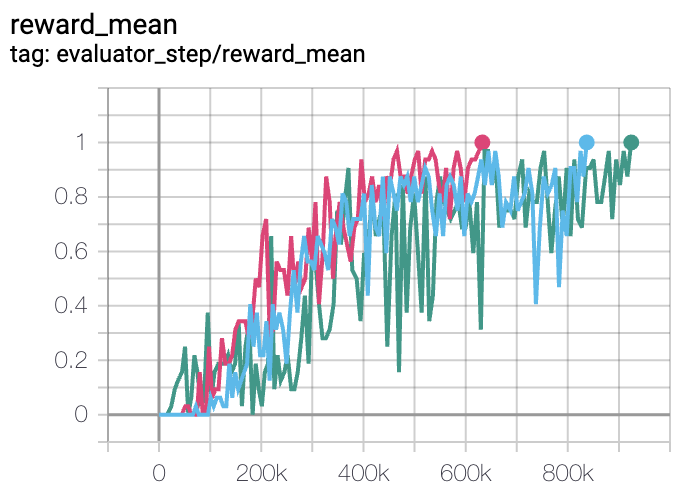

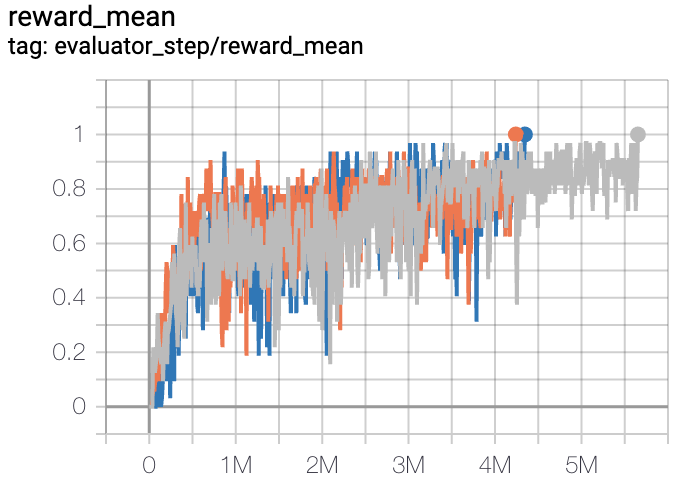

The benchmark results of CollaQ on SMAC (Samvelyan et al. 2019), a suite of StarCraft micromanagement problems, as implemented in DI-engine, are shown below.

Benchmark

| Environment | Best mean reward | Evaluation results | Config link | Comparison |
|---|---|---|---|---|
| 5m6m | 1 | | | Pymarl(0.8) |
| MMM | 0.7 | | | Pymarl(1) |
| 3s5z | 1 | | | Pymarl(1) |

P.S.:

The above results are obtained by running the same configuration on three different random seeds (0, 1, 2).

References

Tianjun Zhang, Huazhe Xu, Xiaolong Wang, Yi Wu, Kurt Keutzer, Joseph E. Gonzalez, Yuandong Tian. Multi-Agent Collaboration via Reward Attribution Decomposition. arXiv preprint arXiv:2010.08531, 2020.

Mikayel Samvelyan, Tabish Rashid, Christian Schroeder de Witt, Gregory Farquhar, Nantas Nardelli, Tim G. J. Rudner, Chia-Man Hung, Philip H. S. Torr, Jakob Foerster, Shimon Whiteson. The StarCraft Multi-Agent Challenge. arXiv preprint arXiv:1902.04043, 2019.